According to Gartner, one in four job applicants will be fake by 2028. For remote-heavy teams, that future is already here: it's deepfake interviews, synthetic identities, and organized fraud rings targeting open roles. Some fraudulent applicants are collecting salaries while outsourcing the actual work. Others are stealing intellectual property or installing malware on company systems.

This guide breaks down who's faking it, how they do it, and how to catch them before they're on your payroll.

What are fake job applicants?

Fake job applicants are candidates who misrepresent their identity, qualifications, or intent during the hiring process. This ranges from resume embellishers who inflate their experience to fully synthetic identities created specifically to deceive employers.

The spectrum includes candidates who claim credentials they don't have, use someone else's identity or qualifications, employ proxies or AI to complete interviews on their behalf, submit plagiarized or AI-generated work samples, or deliberately conceal their true identity, location, or employment intent.

It's important to distinguish fake applicants from job scams targeting job seekers. Those scams involve fraudulent "employers" trying to steal money or personal information from candidates. Fake applicants represent the inverse: fraudulent candidates targeting legitimate employers to secure employment, collect salaries, steal data, or conduct corporate espionage.

The financial and security implications are significant. A fake hire can collect months of salary before detection, access sensitive company data and systems, install malware or backdoors for later exploitation, and steal intellectual property for competitors or foreign governments. The cost extends beyond salary. It includes productivity loss, security remediation, and potential regulatory violations if fraudulent employees access customer data.

Why fake applicants are surging

Three converging trends have created an explosion in fake job applicants:

AI tools make fabrication trivially easy. ChatGPT and similar models can generate convincing resumes, cover letters, and application responses in minutes. Deepfake software creates realistic video and audio for remote interviews, with lip-sync and facial expressions sophisticated enough to fool most human observers. Synthetic identity generators produce completely false personas, LinkedIn profiles, employment histories, and educational credentials that pass basic verification. Portfolio generators create work samples that appear authentic but are entirely AI-generated or plagiarized.

What once required significant technical expertise or criminal infrastructure now happens with consumer-grade tools. A fraudulent applicant can create a complete fake identity, apply to dozens of jobs, and conduct AI-assisted interviews, all from their laptop.

Remote hiring removes in-person verification. The shift to remote work eliminated one of the most basic fraud prevention mechanisms: meeting candidates face-to-face. Video interviews don't provide the same verification: cameras can be positioned to hide telltale signs, video can be deepfaked or pre-recorded, and interviewers can't verify the person's physical presence or ask them to complete on-the-spot tasks that would expose fraud.

Background checks that worked in an in-person era struggle with remote scenarios. Verifying someone's identity, credentials, and work history becomes significantly harder when you never meet them physically, and they're operating from locations where traditional verification methods don't work.

Financial incentives have grown significantly. Several economic factors make fake applications attractive:

Salary arbitrage: Individuals in low-cost countries secure remote US or European salaries, then outsource the actual work to even lower-cost contractors while pocketing the difference. In documented cases, fake employees collected six-figure salaries while paying others a fraction of that to do the work.

Data theft and corporate espionage: Foreign intelligence services and competitors plant fake employees to access intellectual property, customer data, product roadmaps, and strategic information. The value of stolen data often exceeds what the fake employee could earn in a legitimate salary.

Organized fraud operations: Criminal networks run multiple fake identities simultaneously across different companies, treating job fraud as a scalable revenue stream. These operations have the infrastructure to create synthetic identities, conduct interviews, and manage employment relationships for dozens of fake employees.

According to Department of Justice prosecutions, North Korean IT workers alone have infiltrated hundreds of US companies using fake identities, generating millions in revenue that flows back to the regime while potentially accessing sensitive systems and data.

Types of fake job applicants

Fake applicants range in sophistication. Understanding these tiers helps prioritize detection efforts:

Resume embellishers

These candidates inflate their actual experience rather than fabricating it entirely. Common tactics include inflated job titles (claiming "Senior" or "Lead" when they held junior roles), fabricated metrics ("increased revenue by 40%" with no verification), extended tenure (claiming 3 years at a company where they worked 6 months), and exaggerated responsibilities (describing tasks they observed others doing as their own work).

Resume embellishers represent the lowest level of sophistication but the highest volume. Many candidates engage in minor embellishment without considering it fraud. They often pass initial resume screening because the underlying experience is real, even if exaggerated.

The risk is moderate: These candidates may be less qualified than they claimed, leading to performance issues, but they're typically real people with some relevant background. Detection occurs during reference checks or when interview questions reveal they can't speak knowledgeably about their claimed accomplishments.

Credential fabricators

These applicants claim credentials they don't have: fake degrees from real or fictitious universities, fabricated professional certifications, plagiarized or AI-generated portfolio work, and invented past employment at companies where they never worked.

Credential fabrication has medium sophistication but is increasingly difficult to spot manually. AI can generate convincing certificates, transcripts, and even portfolio pieces. Some fabricators use real diploma mills, institutions that sell degrees without requiring coursework, making the credentials technically "real" but worthless.

The risk is higher than simple embellishment: These candidates may completely lack the foundational knowledge their credentials claim to certify. An engineer with a fabricated degree may not understand fundamental concepts. A designer with a plagiarized portfolio can't produce similar work when hired.

Detection requires active verification: contacting universities to confirm degrees, validating certifications with issuing bodies, and conducting technical assessments that test the skills those credentials claim to represent.

Proxy and deepfake interviewees

In these scenarios, someone other than the actual applicant completes the interview, either a human proxy or an AI-generated deepfake. The person who shows up on Day 1 isn't the one who was interviewed.

Human proxy interviews involve a skilled interviewer completing the screening while the actual (less qualified) person starts the job. Deepfake interviews use AI to generate realistic video and audio of a fictional candidate, with someone controlling responses in real-time or using pre-recorded segments.

This high-sophistication fraud came to public attention when KnowBe4, a cybersecurity company, inadvertently hired a fake IT worker who used a stolen identity and had someone else complete video interviews. The fraud was only discovered after the person attempted to load malware onto company systems on their first day.

Detection signals during interviews include lip-sync mismatches between audio and video, lighting inconsistencies suggesting composite video, unnatural eye contact or facial expressions, reluctance to turn on the camera or perform unexpected actions, and background noise suggesting someone coaching responses.

The risk is severe: you're hiring someone whose capabilities are completely unknown, who may be working for competitors or foreign actors, and who gained access under entirely false pretenses.

Organized fraud rings

The most sophisticated tier involves state-sponsored actors and criminal networks running multiple fake identities simultaneously. North Korean IT workers represent the most documented example: IT professionals operating under false identities to generate revenue for the regime while potentially conducting espionage or installing malware.

These operations have infrastructure: teams that create synthetic identities, "laptop farms" where multiple fake employees' computers are centrally managed, money-laundering networks to receive salaries, and coordinators who manage interviews and onboarding for fake employees.

The Department of Justice has prosecuted numerous cases of North Korean nationals operating fake identities at US technology companies. In one case, workers at over 300 companies were traced back to organized fraud operations. These were coordinated networks with support infrastructure.

Detection is extremely difficult because these operations use sophisticated tradecraft: real US-based addresses (mail forwarding services), legitimate-seeming work histories, plausible interview performances, and often some ability to perform the job (at least initially). The goal is prolonged access to systems and data.

Red flags include reluctance to meet in person or appear on company premises, device and IP anomalies suggesting access from unexpected locations, attempts to access systems or data beyond job requirements, and patterns across multiple hires suggesting coordinated operation.

How to spot fake job applicants

Effective detection requires a stage-by-stage approach, looking for different signals at each point in the hiring process:

Application stage red flags

Email and domain analysis: Newly created email addresses (domain age under 6 months), disposable email domains commonly used for temporary accounts, and email patterns suggesting bulk account creation (sequential numbers, similar naming patterns).

LinkedIn profile inconsistencies: Profiles created recently but claiming years of experience, sparse connections inconsistent with claimed career level, employment history that doesn't match the resume, skills endorsements from accounts that also appear fake, and profile photo reverse image search showing it's stock photography or someone else's photo.

Resume metadata signals: Documents created very recently despite claiming years of experience, editing patterns suggesting template use or AI generation, formatting that's either too perfect (suggesting automation) or contains telltale signs like unusual fonts or hidden text, and content that mirrors job descriptions with suspicious precision.

Application velocity anomalies: The same person applying from multiple geographic locations in short timeframes, IP addresses that don't match the claimed location, and device fingerprints showing the same device submitting applications under different identities.

Modern fraud detection tools automatically analyze these signals, flagging applications with multiple risk indicators for human review before they reach the interview stage.
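As a rough illustration of how a few of these application-stage signals could be checked, here is a minimal Python sketch. It is not how any particular vendor works. The function names, the tiny disposable-domain list, the 183-day cutoff for "under 6 months," and the `device_id` field are all assumptions made for the example, and the domain creation date is assumed to come from a separate WHOIS lookup that is not shown.

```python
import re
from collections import defaultdict
from datetime import date

# Hypothetical sample list; a real deployment would use a maintained blocklist.
DISPOSABLE_DOMAINS = {"mailinator.com", "guerrillamail.com", "10minutemail.com"}


def email_flags(email, domain_created, today):
    """Return application-stage red flags for one email address."""
    local, _, domain = email.lower().rpartition("@")
    flags = []
    if domain in DISPOSABLE_DOMAINS:
        flags.append("disposable_domain")
    # "Under 6 months" is approximated as fewer than 183 days (assumption).
    if domain_created is not None and (today - domain_created).days < 183:
        flags.append("new_domain")
    # Local part ending in a run of 3+ digits, e.g. "dev0042" (bulk-creation hint).
    if re.search(r"\d{3,}$", local):
        flags.append("numeric_suffix")
    return flags


def shared_devices(applications):
    """Map each device fingerprint to the applicant names using it,
    keeping only fingerprints seen under more than one identity."""
    by_device = defaultdict(set)
    for app in applications:
        by_device[app["device_id"]].add(app["name"])
    return {dev: sorted(names) for dev, names in by_device.items() if len(names) > 1}
```

Any single flag here is weak evidence on its own (plenty of real candidates have digits in their email address), which is why these checks are better treated as inputs to human review than as automatic rejections.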

Screening and interview red flags

Video interview technical signals: Lip-sync mismatches where audio and mouth movements don't align perfectly, lighting inconsistencies suggesting composite or AI-generated video, unnatural eye contact or facial expressions with robotic quality, unusual backgrounds that seem artificially generated, and reluctance to turn on camera or refusal to perform unexpected actions ("Can you hold up a piece of paper with today's date?").

Audio cues suggesting fraud: Background noise indicating someone coaching responses off-camera, unnatural pauses before answers suggesting AI processing time or proxy communication delay, responses that sound scripted or overly polished, and inability to engage in natural back-and-forth conversation.

Skills mismatch indicators: Inability to discuss specific details about claimed accomplishments, lack of depth when probed on technical topics their resume claims expertise in, responses that sound like AI-generated summaries rather than personal experience, and a significant disconnect between the impressive resume and a weak interview performance.

Behavioral signals: Extreme nervousness beyond normal interview anxiety, reluctance to answer straightforward questions about their background, evasiveness about why they left previous roles or employment gaps, and inconsistencies between different interview rounds or interviewers.

Post-interview verification red flags

Reference check anomalies: References who can't be reached at the company they supposedly work for; reference phone numbers that are VoIP or virtual numbers rather than company lines; references who provide generic praise but can't discuss specific projects or work quality; and employment verification that doesn't match the claimed dates or titles.

Employment history inconsistencies: LinkedIn employment dates that don't match the resume or application, companies that can't confirm the person ever worked there, and gaps in employment history with suspicious explanations.

Device and IP signals during assessments: Assessment completions from IP addresses in different countries than the claimed location, device fingerprints suggesting use of virtual machines or remote access tools, browser configurations inconsistent with the claimed operating system or device, and assessment performance that contradicts interview evaluation.

Assessment result patterns: Work samples that are plagiarized or AI-generated, technical assessment scores that don't align with interview performance, and inconsistencies between timed assessments (where they struggle) and take-home work (suspiciously perfect).
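Two of the post-interview checks above, employment dates that disagree between sources and assessment sessions from unexpected countries, are simple to express in code. The sketch below is illustrative only: the function names, the 31-day tolerance, and the input shapes are assumptions, and the session countries are assumed to have already been resolved from IP addresses by a geolocation service that is not shown.

```python
from datetime import date


def date_mismatches(resume_jobs, linkedin_jobs, tolerance_days=31):
    """Compare employment dates per employer between two sources.

    Each argument maps an employer name to a (start, end) pair of dates.
    """
    issues = []
    for employer, (r_start, r_end) in resume_jobs.items():
        if employer not in linkedin_jobs:
            issues.append((employer, "missing_on_linkedin"))
            continue
        l_start, l_end = linkedin_jobs[employer]
        # Small differences are tolerated; people often round to the month.
        if (abs((r_start - l_start).days) > tolerance_days
                or abs((r_end - l_end).days) > tolerance_days):
            issues.append((employer, "date_mismatch"))
    return issues


def location_mismatch(claimed_country, session_countries):
    """Return countries seen during assessment sessions that differ from the claimed one."""
    return sorted({c for c in session_countries if c != claimed_country})
```

A mismatch is a prompt for a follow-up question rather than proof of fraud: candidates travel, use VPNs, and forget to update profiles.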

Building a fraud applicant detection process

Moving from reactive fraud discovery to systematic prevention requires building detection into your hiring workflow:

Automate multi-signal screening at application intake. Implement fraud detection tools that analyze applications across multiple risk dimensions: email and domain verification, LinkedIn profile analysis, device and IP tracking, resume content analysis for AI generation patterns, and comparison against known fraud patterns. Flag high-risk applications for human review before investing recruiter time in screening.

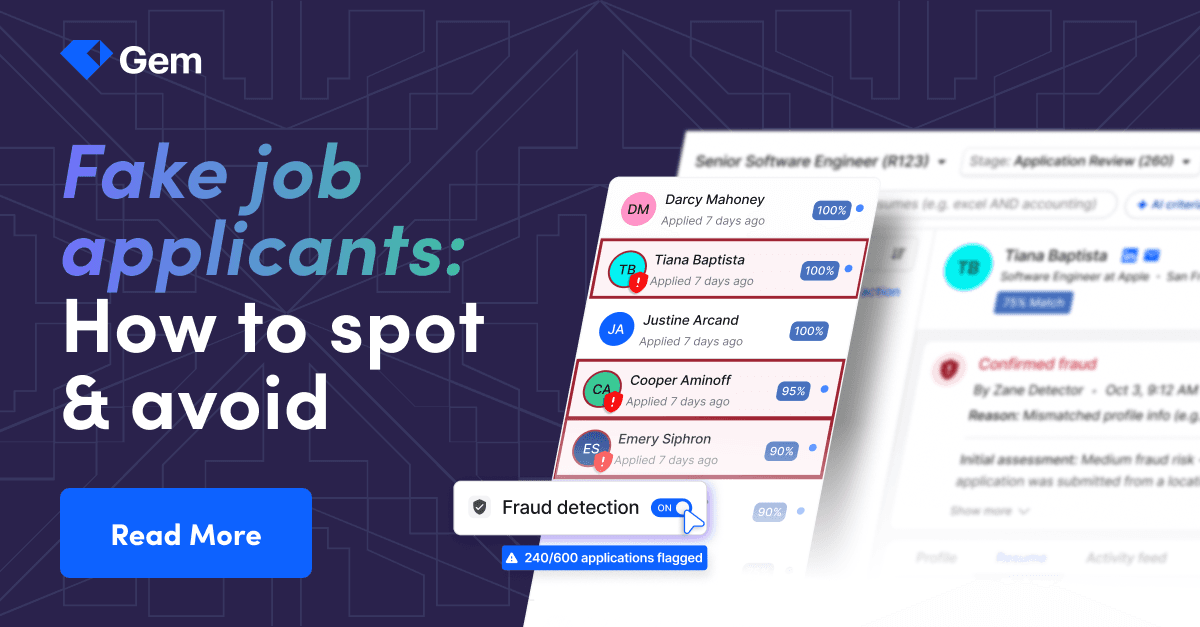

Integrated solutions like Gem's Fraud Detection Agent automatically evaluate applications using multiple signals, including resume metadata, email, phone, LinkedIn, person verification, IP, and device data. Unlike competitors that just flag individual signals without context, Gem evaluates multiple signals together and assigns a clear risk level (high, medium, low) with 90%+ accuracy.

Powered by Tofu, a specialized fraud detection company that has analyzed 5M+ applicants using billions of data points, the agent runs automatically in the background with zero added friction for recruiters or candidates.

Real-world impact is significant: Daxko saw 14-28% of applicants flagged as fraudulent across active remote tech roles and cut fraud-check costs by 89% after switching to Gem. OneSignal found that on one open tech role, 88% of applicants were flagged high risk, reclaiming 14+ hours per role after eliminating manual review. As Julia Graham, Senior Technical Recruiter at OneSignal, noted: "I could not talk to another fake candidate. It's draining. Now I'm actually spending my time on people who are real."

Layer AI detection on top of human review. Use AI to process high volumes and flag anomalies, but maintain human judgment for final decisions. AI can identify patterns humans miss (IP anomalies, metadata signals, cross-application patterns), while humans catch contextual red flags AI overlooks (interview demeanor, response quality, subtle inconsistencies).

The combination is more effective than either alone: AI provides scalable screening across all applications, and human review focuses on candidates who passed initial screening but still show concerning signals. Recruiters see clear explanations for why applications are flagged and stay in control. They make the final decision on every candidate.
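To make the idea of combining signals concrete, here is a minimal Python sketch of weighted scoring that returns a risk level along with the reasons behind it, so a reviewer can see why an application was flagged. This is not Gem's or Tofu's methodology. The signal names, weights, and thresholds are hypothetical and would need tuning against confirmed cases.

```python
# Hypothetical weights; real values would be tuned against confirmed fraud cases.
SIGNAL_WEIGHTS = {
    "disposable_email": 3,
    "ip_location_mismatch": 3,
    "shared_device": 4,
    "new_linkedin_profile": 2,
    "recent_resume_metadata": 1,
}


def assess(signals, high=6, medium=3):
    """Combine fired signals into a risk level plus the reasons behind it."""
    # De-duplicate and ignore unknown signal names.
    fired = sorted(s for s in set(signals) if s in SIGNAL_WEIGHTS)
    score = sum(SIGNAL_WEIGHTS[s] for s in fired)
    if score >= high:
        level = "high"
    elif score >= medium:
        level = "medium"
    else:
        level = "low"
    return {
        "level": level,
        "score": score,
        "reasons": fired,
        # The score only routes the application; a recruiter makes the decision.
        "needs_human_review": level != "low",
    }
```

For example, `assess(["shared_device", "disposable_email"])` scores 7 and comes back as high risk with both reasons listed, while a lone `recent_resume_metadata` signal scores 1 and stays low.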

Implement verification checkpoints at stage transitions. Don't wait until after the offer to verify credentials and employment.

Build verification into the process:

After initial screening: Verify LinkedIn profile matches resume, check email domain legitimacy

Before the first interview: Confirm candidate's identity through video verification, requiring specific actions

After technical screening: Verify claimed credentials with issuing institutions

Before final interviews: Complete employment verification for recent roles

Pre-offer: Comprehensive background check, including identity verification

Early-stage verification catches fraud before you've invested significant time from recruiters and hiring managers. Discovering fraud at the offer stage means you've wasted weeks and need to restart the hiring process.
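One way to keep these checkpoints from being skipped is to encode them as ordered gates and refuse to advance a candidate until the checks for the current gate are done. The Python sketch below assumes hypothetical gate and check names that mirror the list above; it is not tied to any particular applicant tracking system.

```python
# Ordered gates mirroring the checkpoints above; all names are hypothetical.
CHECKPOINTS = [
    ("after_initial_screening", ["linkedin_matches_resume", "email_domain_legitimate"]),
    ("before_first_interview", ["video_identity_confirmed"]),
    ("after_technical_screening", ["credentials_verified"]),
    ("before_final_interviews", ["recent_employment_verified"]),
    ("pre_offer", ["background_check_complete"]),
]


def first_blocking_gate(completed):
    """Return (gate, missing_checks) for the earliest gate with unfinished checks,
    or None if every checkpoint has been cleared."""
    for gate, required in CHECKPOINTS:
        missing = [check for check in required if check not in completed]
        if missing:
            return gate, missing
    return None
```

A candidate who has cleared the first two gates but whose degree has not been confirmed would be held at `after_technical_screening` until `credentials_verified` is recorded.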

Train recruiters on AI-era red flags. Many recruiters were trained to spot resume embellishment but not sophisticated fraud involving deepfakes, synthetic identities, or organized operations. Update training to cover modern fraud patterns, interview techniques that expose proxies or AI assistance, verification processes, when to escalate concerns, and legal considerations around fraud detection and documentation.

Document and escalate suspicious patterns. Build an internal fraud playbook that documents what constitutes suspicious activity, who should be notified when fraud is suspected, how to preserve evidence without alerting the candidate, when to involve legal or law enforcement, and what information to share with other companies (through appropriate channels).

If you discover an organized fraud pattern (multiple fake applicants from the same operation), reporting it to law enforcement helps protect other companies and may uncover broader criminal or state-sponsored activity.

Continuously update detection as fraud evolves. Fraudulent techniques adapt quickly as each detection method becomes known. Review your fraud detection quarterly: what new patterns are emerging, which signals stopped being reliable, which tools or processes need updating, and what other companies in your industry are seeing.
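For the "which signals stopped being reliable" question, one simple measure is how often each signal fired on applications that human review later confirmed as fraudulent. The Python sketch below assumes you keep a log of reviewed cases as pairs of (signals that fired, whether fraud was confirmed); the function name and input shape are illustrative.

```python
from collections import Counter


def signal_precision(reviewed):
    """Compute, per signal, the fraction of its firings that were confirmed fraud.

    reviewed: iterable of (signals_fired, confirmed_fraud) pairs from human review.
    """
    fired, hits = Counter(), Counter()
    for signals, confirmed in reviewed:
        for s in set(signals):  # count each signal once per application
            fired[s] += 1
            if confirmed:
                hits[s] += 1
    return {s: hits[s] / fired[s] for s in fired}
```

Comparing these fractions quarter over quarter gives a rough view of which signals are drifting toward noise and may need to be reweighted or retired.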

Fake applicants are both a resume-screening problem and a systemic risk, one that requires automated detection, human judgment, and continuous process improvement. Fraud is already showing up on engineering, finance, and remote-first roles at alarming rates. Gem's AI Fraud Detection Agent catches fraudulent applicants automatically before they waste interview time or escalate to Security, helping recruiters focus on building relationships with real candidates.

FAQ

How common are fake job applicants?

Fake job applicants are increasingly common and projected to surge dramatically. Gartner predicts that by 2028, one in four job applicants will be fake, up from current estimates of 5-15% depending on industry and role type.

However, "fake" exists on a spectrum. If you include all forms of misrepresentation (minor resume embellishment, inflated titles, exaggerated metrics), the percentage is much higher: some studies suggest over 50% of resumes contain some inaccuracy. If you focus on serious fraud (fake credentials, proxy interviews, synthetic identities), current estimates range from 2% to 8% of applications.

Remote-first companies and those hiring for fully remote roles report higher rates of fraudulent applications than those hiring for in-person roles. Rates also vary by geography and role level: companies that hire internationally see higher fraud rates than those that hire only domestically, and entry- and mid-level positions are targeted more often than executive roles, where verification tends to be more thorough. Among Gem's early users, Daxko saw up to 30% of applicants flagged on critical roles, and at the extreme end, OneSignal saw 88% of applicants flagged on a remote technical role.

What industries are most targeted by fake applicants?

Technology companies face the highest rates of fake applicants, particularly when hiring for software engineering, data science, cybersecurity, and IT infrastructure roles. The combination of high salaries, widespread remote work, and hard-to-verify technical skills makes tech especially attractive to fraudsters.

Financial services companies are heavily targeted because roles provide access to sensitive financial data and systems, fraud rings can potentially access customer accounts or transaction systems, and positions often involve privileged system access. Banks, fintech companies, and payment processors report significant fraud attempts.

Healthcare and pharmaceutical companies attract fake applicants seeking access to patient data, drug development IP, and research findings. Medical credentialing fraud is particularly concerning because fake healthcare workers can endanger patients.

Defense contractors and companies with government contracts face state-sponsored fake applicants attempting to access classified information, steal defense technology, or compromise secure systems. North Korean IT worker fraud operations specifically target these sectors.

Remote-first startups, regardless of industry, see elevated fraud because they lack physical offices where identity can be verified, often have less mature hiring processes, may not have robust background check procedures, and the startup environment sometimes prioritizes speed over thorough verification.

Can AI detect fake job applicants?

Yes, AI can detect many types of fake applicants, often more effectively than manual review for high-volume screening. AI fraud detection analyzes patterns across multiple signals that humans would struggle to track: IP addresses and device fingerprints across applications, email domain age and legitimacy indicators, LinkedIn profile consistency with application materials, resume metadata suggesting AI generation, application velocity anomalies, and cross-applicant patterns suggesting coordinated fraud.

Gem's AI Fraud Detection Agent evaluates applications using multiple signals at once—resume metadata, email, phone, LinkedIn, person verification, IP, and device data—and assigns a clear risk level (high, medium, low) with 90%+ accuracy. Powered by Tofu, which has analyzed 5M+ applicants using billions of data points, the system provides a proven fraud-detection methodology rather than simple signal flagging.

The most effective approach combines AI and human judgment: AI handles scale, screening thousands of applications for anomalies and flagging high-risk candidates for human review; humans provide context, catching subtle red flags during interviews that AI misses and making final judgment calls on ambiguous cases. Recruiters stay in control with full transparency into why applications are flagged, while AI handles the heavy lifting of multi-signal analysis.

What should I do if I discover a fake applicant?

If you discover a fake applicant during the hiring process, immediately halt their candidacy and document all evidence, including resume, application materials, interview recordings if available, email communications, LinkedIn profile screenshots, IP addresses and device information from your applicant tracking system, and specific inconsistencies or red flags you identified.

Do not confront the candidate directly or alert them that you've discovered fraud—this allows them to delete evidence or warn others in potential fraud networks. Simply send a standard rejection without explanation.

Report the fraud to your internal security team if you have one, especially if the fake applicant progressed far enough to access any company systems, information, or communications. Your legal department should be notified if there are potential legal implications or if you're considering involving law enforcement.

Consider reporting to law enforcement if the fraud appears sophisticated or part of organized activity (FBI handles cybercrime and fraud with interstate or international elements), the applicant may be part of state-sponsored operations (FBI Counterintelligence Division), or you've discovered a pattern affecting multiple companies (coordinate through industry groups or law enforcement).

If you discover a fake applicant after they've been hired, the situation is more serious: immediately revoke all system access and credentials; conduct a security review to determine what systems and data they accessed; preserve evidence, including work product, communications, and access logs; involve legal counsel before any confrontation; and prepare for potential legal action if data was stolen or systems compromised.

Share information (appropriately) with other companies through industry security groups, professional networks, or platforms designed for sharing threat intelligence, but consult legal first to ensure you're not violating privacy laws or exposing the company to defamation claims.

Update your fraud detection processes based on what you learned: How did this fake applicant get through your screening? What signals did you miss that you could catch in the future? Do you need better verification checkpoints or tools? Treat each fraud discovery as a learning opportunity to strengthen your defenses against future attempts.
